Artificial intelligence (AI) is reshaping how systematic reviews are conducted, opening up new opportunities to reduce manual workload and accelerate evidence production. Many tools promise dramatic gains in speed. However, speed alone is a misleading measure of success if it comes at the expense of transparency, reproducibility or trust in the evidence produced.

When automation is used, you remain responsible for producing evidence that is transparent, reproducible and trustworthy. For many reviewers and librarians, this responsibility also includes being able to justify tool choices within institutional governance, procurement requirements and peer-review expectations.

At Covidence, we design AI features to support you in meeting these responsibilities. Our role is not to replace methodological judgement, but to make it easier to apply it with confidence.

Each AI feature is validated at the task level, with performance aligned to the consequences of error, data protections in place and limitations made explicit. This article sets out the four principles that guide Covidence’s approach to responsible automation. Our approach is aligned with the 2025 Cochrane Position Statement on AI and the RAISE (Responsible AI in Systematic Evidence Synthesis) guidelines.

Responsible automation principles

1. Validated automation with human oversight

We vary the level of automation and human oversight based on the task being performed and the cost of an error for your review. Different automation patterns are applied depending on methodological risk and whether a feature can be used safely and reliably in real-world review settings.

Rather than relying solely on laboratory-style benchmarks, we prioritise automation that has been validated under realistic conditions, including diverse review teams, varying levels of methodological experience, and the practical constraints of live projects. Features are only released when they can be used confidently without requiring specialist AI or technical expertise, while keeping reviewers in control of all final decisions.

Validated exclusion (RCT classifier): For tasks such as identifying randomised controlled trials, missing a relevant study is a critical failure. Our RCT classifier is rigorously evaluated to achieve greater than 99% recall. At this level of performance, automated exclusion of non-randomised studies can be used with confidence, reducing manual screening burden while protecting review integrity.

Decision support (extraction and sorting): For tasks requiring nuanced judgement, such as data extraction or relevance prioritisation, AI is used as a decision-support tool. The system suggests values or reorders records to speed up work, but final verification and acceptance always remain with the reviewer.

2. Task-specific performance metrics

Each AI feature is tuned based on the task it supports and the cost of error for your review. Different tasks require different performance trade-offs.

Prioritising recall (screening): For screening tasks, failing to identify relevant studies carries a high methodological cost. Classifiers are therefore calibrated for very high recall (also called sensitivity), even if that means reviewers see some irrelevant records.

Prioritising precision (extraction): For tasks like suggesting study characteristics, we prioritise precision. The goal is to provide accurate, high-quality suggestions that save you typing time, rather than overwhelming you with low-confidence guesses.
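The trade-off between the two settings can be sketched in a few lines of Python. This is an illustration only, not Covidence's implementation: the scores, labels, function names and thresholds below are all hypothetical, and the sketch assumes a classifier that outputs a relevance score for each record.

```python
from typing import List, Tuple

# Hypothetical validation data, invented for illustration only:
# classifier scores and human labels (1 = relevant, 0 = irrelevant).
SCORES: List[float] = [0.95, 0.90, 0.80, 0.60, 0.40, 0.30, 0.20, 0.05]
LABELS: List[int] = [1, 1, 0, 1, 0, 1, 0, 0]


def recall_precision(
    scores: List[float], labels: List[int], threshold: float
) -> Tuple[float, float]:
    """Treat records scoring at or above the threshold as predicted relevant."""
    pairs = list(zip(scores, labels))
    tp = sum(1 for s, y in pairs if s >= threshold and y == 1)
    fp = sum(1 for s, y in pairs if s >= threshold and y == 0)
    fn = sum(1 for s, y in pairs if s < threshold and y == 1)
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    return recall, precision


def highest_threshold_for_recall(
    scores: List[float], labels: List[int], target_recall: float
) -> float:
    """Return the highest observed score that still meets the recall target."""
    if not scores:
        raise ValueError("scores must not be empty")
    for threshold in sorted(set(scores), reverse=True):
        recall, _ = recall_precision(scores, labels, threshold)
        if recall >= target_recall:
            return threshold
    # Fall back to the lowest score, which keeps every record.
    return min(scores)


# Screening-style setting: miss nothing, accept some irrelevant records.
screening_threshold = highest_threshold_for_recall(SCORES, LABELS, 1.0)
print("screening:", screening_threshold,
      recall_precision(SCORES, LABELS, screening_threshold))

# Extraction-style setting: only surface high-confidence suggestions.
print("extraction:", 0.90, recall_precision(SCORES, LABELS, 0.90))
```

On this toy data, the screening-style threshold of 0.30 reaches a recall of 1.0 with a precision of about 0.67, while the stricter threshold of 0.90 reaches a precision of 1.0 but a recall of only 0.5. The same model behaves very differently depending on which error is treated as more costly.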

3. Data stewardship and institutional safeguards

AI features are designed so you can benefit from automation without losing control of your data or exposing work in progress.

Private data: Unpublished data, extracted results and proprietary comments are not used to train public-facing AI models without explicit consent.

Security standards: All AI features operate under the same enterprise-grade security and compliance standards as the rest of the Covidence platform.

4. Explainability, traceability and reporting

AI features are designed to make their behaviour visible and their use easy to explain, justify and report.

Validation transparency: We publish model performance metrics, such as sensitivity and specificity, so reviewers can assess whether a feature is fit for purpose in their specific context.

Reporting support: Templates and guidance are provided to help reviewers document AI use in protocols, PRISMA flow diagrams and methods sections, supporting compliance with peer-review and reporting standards.
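For readers who want to check published figures against their own context, the sketch below shows how sensitivity and specificity are derived from validation counts. The class name, function names and counts are hypothetical and chosen only for illustration. They are not Covidence results.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConfusionCounts:
    """Counts from comparing classifier decisions with human decisions."""
    true_positives: int   # relevant records the classifier kept
    false_negatives: int  # relevant records the classifier excluded
    true_negatives: int   # irrelevant records the classifier excluded
    false_positives: int  # irrelevant records the classifier kept


def sensitivity(c: ConfusionCounts) -> float:
    """Share of relevant records that were kept (also called recall)."""
    return c.true_positives / (c.true_positives + c.false_negatives)


def specificity(c: ConfusionCounts) -> float:
    """Share of irrelevant records that were correctly excluded."""
    return c.true_negatives / (c.true_negatives + c.false_positives)


# Hypothetical validation set of 1,000 records (made-up numbers).
example = ConfusionCounts(
    true_positives=198,
    false_negatives=2,
    true_negatives=640,
    false_positives=160,
)

print(f"sensitivity = {sensitivity(example):.2f}")
print(f"specificity = {specificity(example):.2f}")
```

With these invented counts, sensitivity is 0.99 and specificity is 0.80: two of 200 relevant records would be missed, and 640 of 800 irrelevant records would not need manual screening. Reporting both figures together shows what a feature saves and what it risks.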

Deliberate restraint by design

These principles underpin a growing set of AI features available in Covidence today. Each feature is designed, validated and documented so you can understand how it saves you time, when it is appropriate to use, how it performs and how its use can be transparently documented in protocols, publications and audit contexts.

Alongside this, we invest heavily in research and development, exploring a wide range of cutting-edge AI approaches. By design, only a subset of this work is released into Covidence.

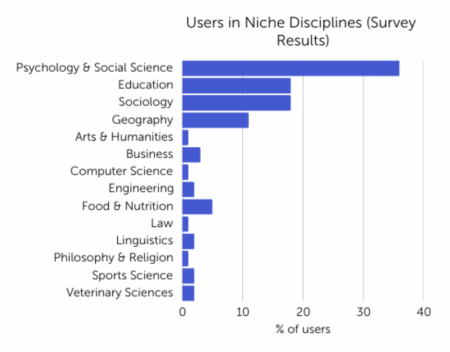

As a trusted tool provider used by more than 450 universities, societies and hospitals, we take our responsibility seriously. We introduce new automation only when it meets the standards required for publication and for institutionally defensible evidence.