At Covidence, we invest heavily in research and development, exploring a wide range of automation (AI) approaches for evidence synthesis. Only a subset of that work is released into the product. That gap is deliberate.

In our previous article on responsible automation, we outlined the principles that guide our approach. To see how these principles operate in practice, let’s walk through some recent automation work within screening.

The ambition & the bar we set for screening automation

We started with a question:

Could we meaningfully reduce the screening stage of a review, without compromising its integrity?

Manual screening is where review teams spend enormous effort. If automation was going to make a real difference, we didn’t want to just “assist” screening; we aimed to remove real workload. That led us to explore excluding clearly irrelevant studies before manual screening began.

Screening is one of the highest-stakes stages of a systematic review workflow. Exclude a relevant study, and you can change the conclusions of the entire review. So we made a deliberate choice. If automation was going to remove studies upstream, it couldn’t require reviewers to double-check every exclusion. That would simply shift the burden from screening to verification. Instead, we designed a workflow for light-touch oversight: reviewers have full visibility of automated exclusions and, if needed, the ability to reverse those decisions.

Given this light-touch level of oversight, it was not enough for the model to perform well “on average.” Human reviewers themselves align on screening decisions only around 80 percent of the time, reflecting the inherent ambiguity of eligibility criteria. If we were going to remove studies upstream, the system needed not merely to approximate typical human performance, but to exceed it, and to do so consistently across diverse review topics, varying eligibility criteria, and real-world data variability.

In other words: the question wasn’t “Can we build this?” It was “Can we build this safely, at scale?”

We explored more than one way to meet that standard.

What choosing NOT to release looks like

Our first approach initially looked like a breakthrough. We used a large language model to interpret a review’s inclusion and exclusion criteria and automatically exclude references that were clearly irrelevant before manual screening began. In development, the results were compelling. The model achieved 98.6 percent recall and was estimated to reduce manual screening workload by around 18 percent. On average, performance looked extremely strong.

We then made the feature available to a group of beta participants to observe how it behaved under real-world review conditions. Average recall was 98 percent. On paper, that is still high performance. But averages can hide risk.

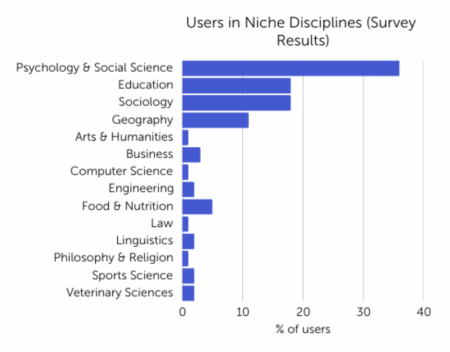

When we examined the distribution more closely, we saw something we could not ignore. In some reviews, recall fell as low as 66 percent. That means roughly one in three relevant studies could be missed. We could not reliably predict which reviews would experience that drop. Performance depended heavily on how eligibility criteria were written, and in practice, those criteria vary widely in clarity, structure, discipline and domain. That variability introduced behaviour we could not confidently control at scale.
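To make the gap between an average and a distribution concrete, here is a minimal sketch in Python. The per-review counts are invented purely for illustration and are not Covidence beta data; the names `recall` and `summarise` are hypothetical helpers, not part of any Covidence tooling.

```python
from statistics import mean


def recall(kept: int, relevant: int) -> float:
    """Share of a review's truly relevant studies that survived automated exclusion."""
    if relevant <= 0:
        raise ValueError("a review needs at least one relevant study")
    return kept / relevant


def summarise(reviews: dict[str, tuple[int, int]]) -> dict[str, float]:
    """Return the mean and the worst-case recall across reviews."""
    per_review = [recall(kept, relevant) for kept, relevant in reviews.values()]
    return {"mean": mean(per_review), "min": min(per_review)}


# Hypothetical (relevant studies kept, relevant studies in total) per review.
reviews = {
    "review_a": (50, 50),
    "review_b": (40, 40),
    "review_c": (99, 100),
    "review_d": (60, 60),
    "review_e": (33, 50),  # one review with poorly handled criteria
}

summary = summarise(reviews)
print(f"mean recall: {summary['mean']:.2f}, worst review: {summary['min']:.2f}")
```

In this made-up set, four of the five reviews lose almost nothing, so the mean stays high, yet the fifth review still loses about a third of its relevant studies. Reporting the minimum (or the full distribution) alongside the mean is what surfaces that risk.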

At that point, the decision became clear. While the model performed well on average and would have delivered meaningful time savings, its performance was not consistent across the diversity of review types, subject areas, writing styles and reviewer experience levels represented in our user base. Supporting that diversity is not optional; it is fundamental to the platform. In a high-stakes workflow like evidence synthesis, “usually works” is not good enough. We chose not to release the model.

That decision did not mean we stepped away from reducing workload in screening. The work became part of our research and development (R&D) portfolio, clarifying the problems we would need to solve before any upstream exclusions could be deployed safely at scale. That learning informed the next approach we explored.

What choosing to release looks like

Instead of interpreting eligibility criteria for each review, we focused on a defined classification problem: identifying whether a study potentially reports a randomised controlled trial.

The Cochrane RCT classifier, developed by the EPPI Centre, had already been independently evaluated at greater than 99 percent recall, with consistent performance across diverse review contexts. Integrated into Covidence, it enables the automatic exclusion of studies unlikely to be RCTs before manual screening begins.

The difference from our first approach was not the intent, but the stability of the signal. The classifier operates on more standardised inputs and can be validated independently of individual review wording. Its behaviour is predictable at scale, and its error profile is well characterised.

Importantly, we also restrict its use. Because the classifier was trained on biomedical literature, within Covidence it is available only for medical and healthcare reviews. We do not extend it into domains where the training data does not support reliable performance. Defining where a model should not be used is as important as defining where it should.

Within Covidence, exclusions are transparent and reversible. Reviewers can inspect decisions and restore studies to screening, and the PRISMA flow diagram updates automatically to document the automated step. The validation data is published and citable, and reporting templates are available to support clear methods reporting.
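As a rough illustration of what “transparent and reversible” can mean in data terms, the sketch below models an automated exclusion that leaves a visible history and can be undone. It is not Covidence’s implementation: the `Study` class, the status values, and the `auto_exclude`, `restore` and `flow_counts` functions are all hypothetical names chosen for this example.

```python
from dataclasses import dataclass, field


@dataclass
class Study:
    title: str
    status: str = "screening"  # hypothetical states: "screening" or "auto_excluded"
    history: list[str] = field(default_factory=list)  # visible record of every decision


def auto_exclude(study: Study, reason: str) -> None:
    """Record an automated exclusion without deleting the study."""
    study.status = "auto_excluded"
    study.history.append(f"auto-excluded: {reason}")


def restore(study: Study, reviewer: str) -> None:
    """Let a reviewer reverse an automated exclusion."""
    if study.status != "auto_excluded":
        raise ValueError("only auto-excluded studies can be restored")
    study.status = "screening"
    study.history.append(f"restored by {reviewer}")


def flow_counts(studies: list[Study]) -> dict[str, int]:
    """Counts a flow diagram could report for the automated step."""
    excluded = sum(1 for s in studies if s.status == "auto_excluded")
    return {"auto_excluded": excluded, "screening": len(studies) - excluded}


studies = [Study("Trial A"), Study("Cohort B"), Study("Case report C")]
auto_exclude(studies[1], "classified as unlikely to be an RCT")
auto_exclude(studies[2], "classified as unlikely to be an RCT")
restore(studies[1], "reviewer_1")  # the reviewer disagrees and brings it back

counts = flow_counts(studies)
print(counts)
```

The point of the design is that an exclusion changes a status rather than removing a record, so the counts reported for the automated step always reflect the current state, including any reversals.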

In this case, the evidence supported release. The task was tightly bounded, the performance consistent and the safeguards clear.

What this revealed

Working through these approaches clarified something important for us. Automation is not a binary question of “does it work?” It is a question of whether it can reduce workload without compromising accuracy or trust.

Over time, our release decisions crystallised into a decision matrix built around three connected questions:

- What is at stake if the automation is wrong?

- How consistently does it perform under real-world conditions?

- Do reviewers retain sufficient oversight and control?

These are not independent gates. It is their interaction that determines whether an approach is ready for release.

This is the second article in Covidence’s six-part series on AI:

- Part 1: Covidence’s approach to responsible automation (AI)

- Part 2: How Covidence decides what AI to release (and what to hold back)

- Coming next: A decision matrix to guide the responsible use of AI in evidence synthesis